The Role Bridging Problem

An observation on functional correctness without domain quality.

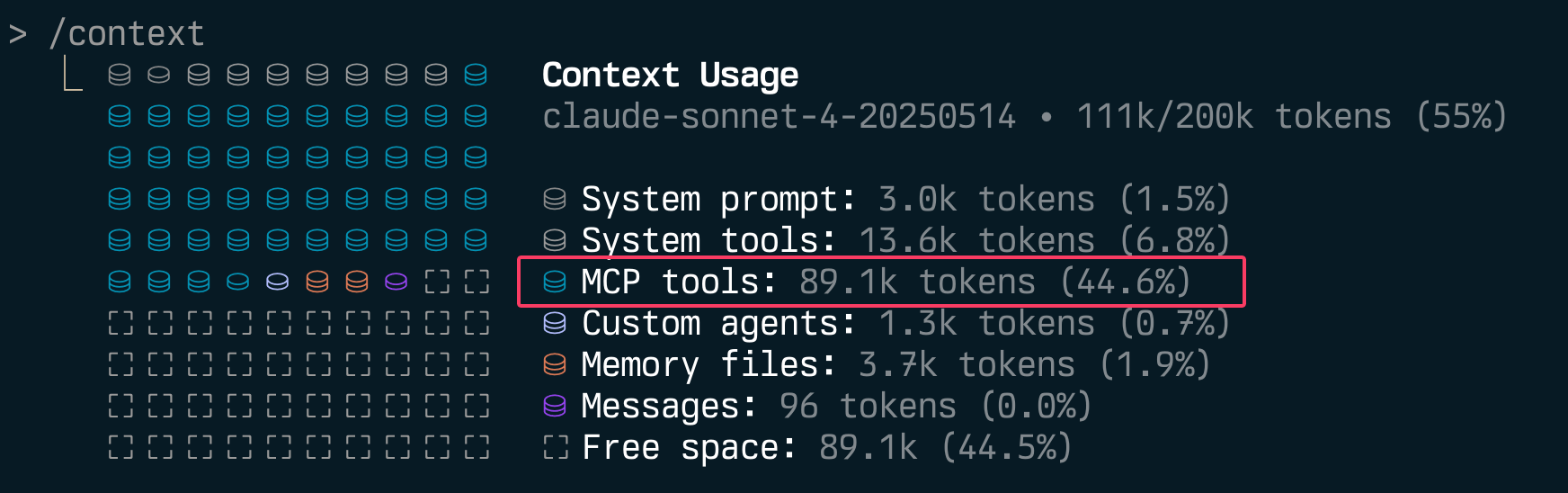

Stop Polluting Context - Let Users Disable Individual MCP Tools

If you’re building MCP servers, you should be adding the ability to disable individual tools.
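As a rough illustration of the idea, here is a minimal sketch of filtering a server's tool registry before it is advertised to a client. The environment variable name `MCP_DISABLED_TOOLS` and the helpers `parse_disabled`, `disabled_from_env`, and `enabled_tools` are hypothetical names made up for this example; they are not taken from any particular MCP SDK or server.

```python
import os


def parse_disabled(raw):
    """Turn a comma-separated string into a set of lower-cased tool names."""
    return {name.strip().lower() for name in raw.split(",") if name.strip()}


def disabled_from_env(env=None, var="MCP_DISABLED_TOOLS"):
    """Read the (hypothetical) env var listing tools the user wants hidden."""
    env = os.environ if env is None else env
    return parse_disabled(env.get(var, ""))


def enabled_tools(all_tools, disabled):
    """Return only the tools that should be advertised to the client."""
    return {
        name: handler
        for name, handler in all_tools.items()
        if name.lower() not in disabled
    }


# Hypothetical registry: tool name -> handler function.
registry = {
    "search": lambda query: f"searching for {query}",
    "fetch": lambda url: f"fetching {url}",
    "think": lambda note: f"noted: {note}",
}

if __name__ == "__main__":
    active = enabled_tools(registry, disabled_from_env())
    print(sorted(active))
```

Filtering at registration time, rather than rejecting calls later, means a disabled tool's name and description never reach the client, so they take up no context.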

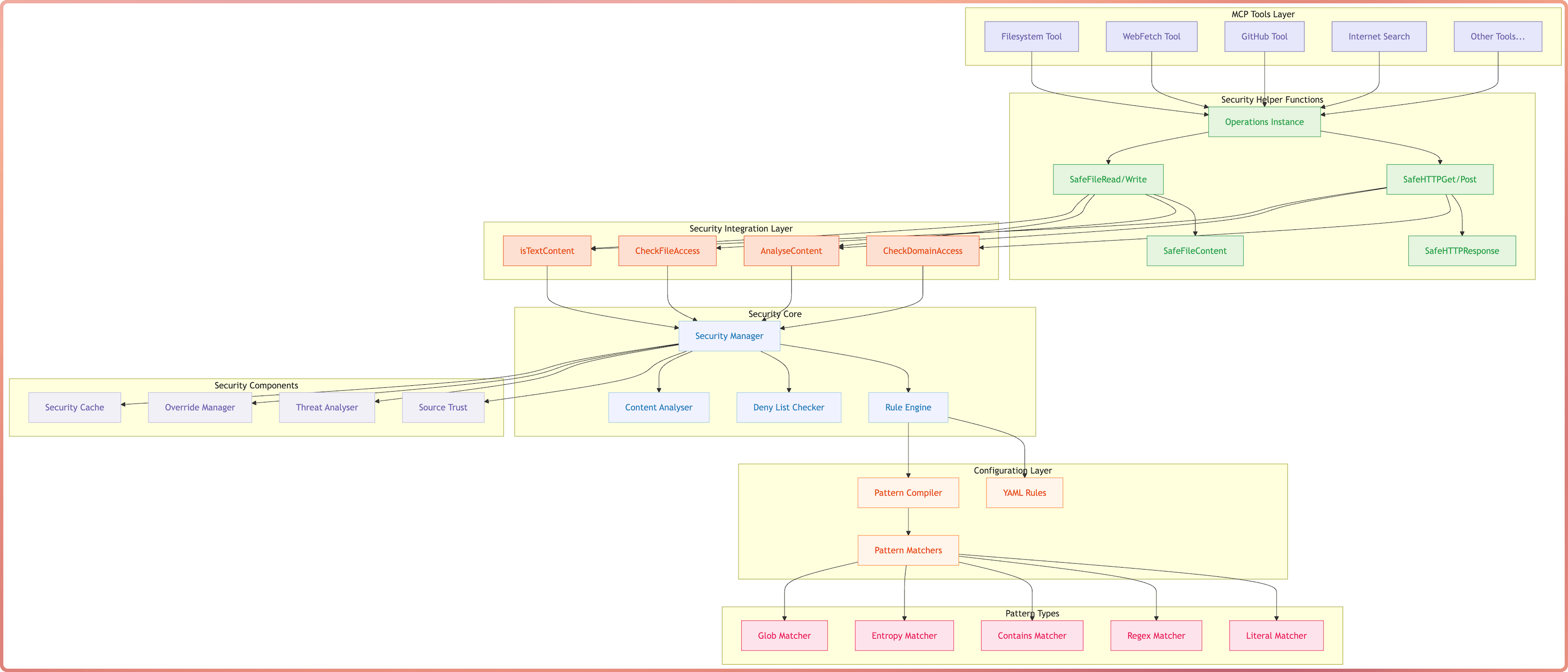

MCP DevTools

MCP DevTools - A single server that replaced the 10-15 odd NodeJS/Python/Rust MCP servers I had running at any given time for agentic coding tools, providing the tools I consider useful for agents when coding. The Problem: The MCP ecosystem has grown rapidly, but I found myself managing many separate servers, each often running multiple times (once for every MCP client I had running), not to mention the ever-growing memory and CPU consumption of the many NodeJS or Python processes. ...

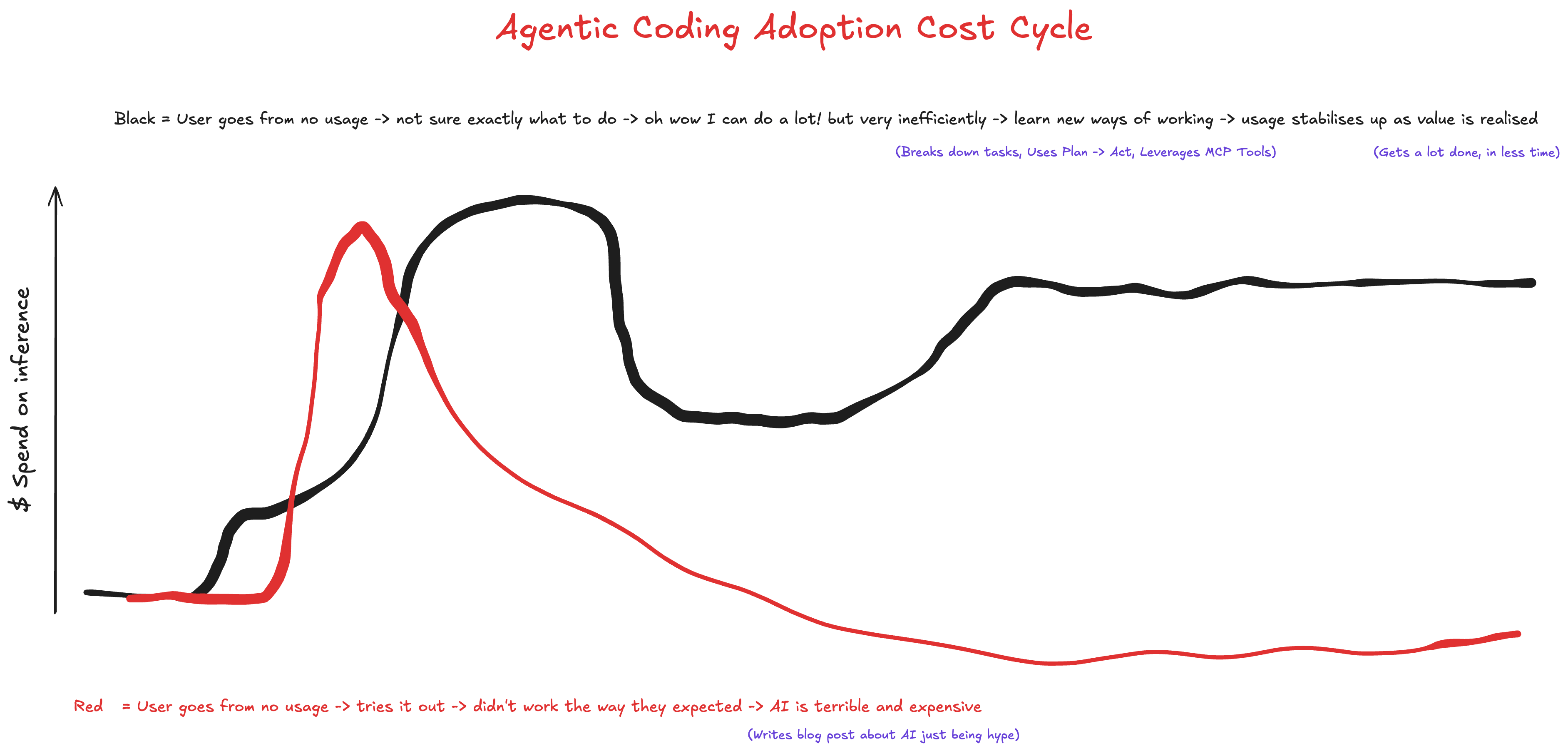

Agentic Coding Adoption Cost Cycle

Agentic Coding Workflow & Cline Demo

A Square Peg hosted event on June 20, 2025, where I demonstrated a basic version of my daily Agentic Coding workflow using Cline and MCP tools. What does it take to write enterprise-grade code in the AI-native era? Join Square Peg investors James Tynan and Grace Dalla-Bona for a live demo and Q&A session with three leading AI-native developers - Grant Gurvis, Listiarso Wastuargo, and Sam McLeod - and get a behind-the-curtain look at the workflows that enable them to ship faster, smarter, and cleaner code using tools like Cursor, Cline, and smolagents. ...

Vibe Coding vs Agentic Coding

Picture this: A business leader overhears their engineering team discussing “vibe coding” and immediately imagines developers throwing prompts at ChatGPT until something works, shipping whatever emerges to production. The term alone, “vibe coding”, conjures images of seat-of-the-pants development that would make any CTO break out in a cold sweat. This misunderstanding is creating a real problem. Whilst vibe coding represents genuine creative exploration that has its place, the unfortunate terminology is causing some business leaders to conflate all AI-assisted or AI-accelerated development with haphazard experimentation. I fear that engineers who use sophisticated AI coding agents, such as advanced agentic coding tools like Cline, to deliver production-quality solutions are finding their approaches questioned or dismissed entirely. ...

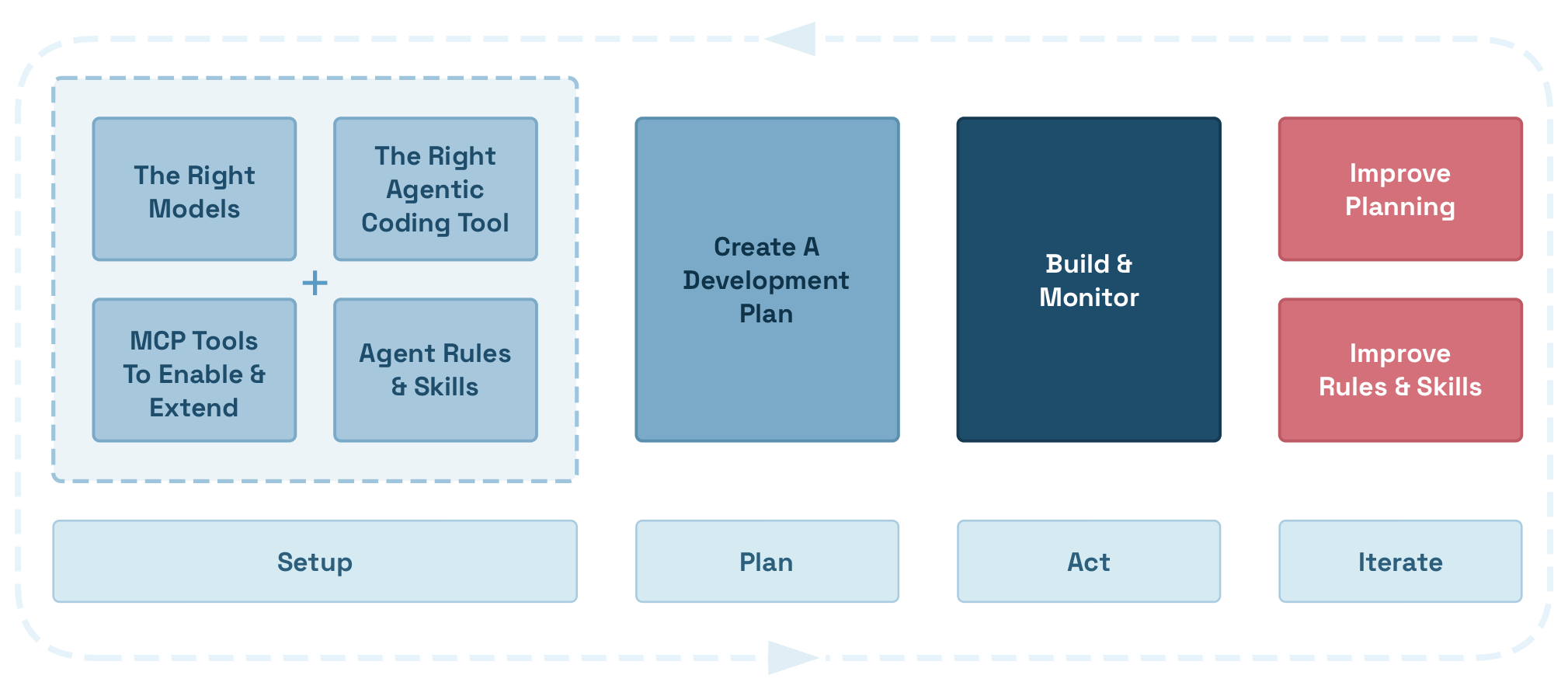

My Plan, Document, Act, Review flow for Agentic Software Development

I follow a simple, yet effective flow for agentic coding that helps me to efficiently develop software using AI coding agents while keeping them on track, focused on the task at hand and ensuring they have access to the right tools and information. The flow is simple: Setup -> Plan -> Act -> Review and Iterate.

Workflow Quickstart: Outline of Setup -> Plan -> Act -> Review & Iterate workflow: ...

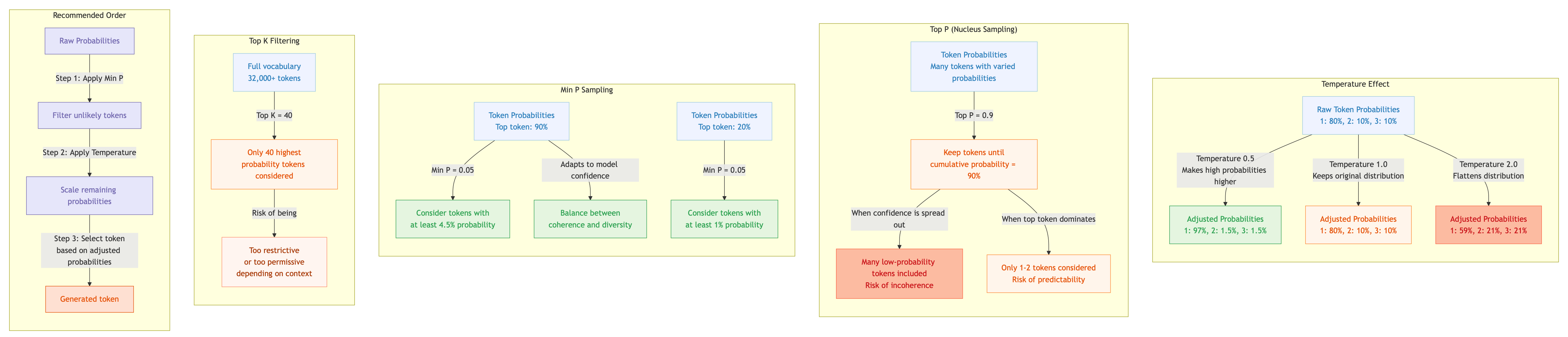

LLM Sampling Parameters Guide

Large Language Models don’t generate text deterministically - they use probabilistic sampling to select the next token based on prediction probabilities. How these probabilities are filtered and adjusted before sampling significantly impacts output quality. This guide explains the key sampling parameters, how they interact, and provides recommended settings for different use cases.

Framework Reference

Last updated: November 2025

Parameter Comparison

| Parameter | llama.cpp flag | llama.cpp default | Ollama option | Ollama default | MLX flag |
|---|---|---|---|---|---|
| Temperature | `--temp` | 0.8 | `temperature` | 0.8 | `--temp` |
| Top P | `--top-p` | 0.9 | `top_p` | 0.9 | `--top-p` |
| Min P | `--min-p` | 0.1 | `min_p` | 0.0 | `--min-p` |
| Top K | `--top-k` | 40 | `top_k` | 40 | `--top-k` |
| Repeat Penalty | `--repeat-penalty` | 1.0 | `repeat_penalty` | 1.1 | Unsupported |
| Repeat Last N | `--repeat-last-n` | 64 | `repeat_last_n` | 64 | Unsupported |
| Presence Penalty | `--presence-penalty` | 0.0 | `presence_penalty` | - | Unsupported |
| Frequency Penalty | `--frequency-penalty` | 0.0 | `frequency_penalty` | - | Unsupported |
| Mirostat | `--mirostat` | 0 | `mirostat` | 0 | Unsupported |
| Mirostat Tau | `--mirostat-ent` | 5.0 | `mirostat_tau` | 5.0 | Unsupported |
| Mirostat Eta | `--mirostat-lr` | 0.1 | `mirostat_eta` | 0.1 | Unsupported |
| Top N Sigma | `--top-nsigma` | -1.0 | Unsupported | - | Unsupported |
| Typical P | `--typical` | 1.0 | `typical_p` | 1.0 | Unsupported |
| XTC Probability | `--xtc-probability` | 0.0 | Unsupported | - | `--xtc-probability` |
| XTC Threshold | `--xtc-threshold` | 0.1 | Unsupported | - | `--xtc-threshold` |
| DRY Multiplier | `--dry-multiplier` | 0.0 | Unsupported | - | Unsupported |
| DRY Base | `--dry-base` | 1.75 | Unsupported | - | Unsupported |
| Dynamic Temp | `--dynatemp-range` | 0.0 | Unsupported | - | Unsupported |
| Seed | `--seed` | -1 | `seed` | 0 | - |
| Context Size | `--ctx-size` | 2048 | `num_ctx` | 2048 | - |
| Max Tokens | `--predict` | -1 | `num_predict` | -1 | - |

Notable Default Differences

| Parameter | llama.cpp | Ollama | Note |
|---|---|---|---|
| `min_p` | 0.1 | 0.0 | Ollama disables Min P by default |
| `repeat_penalty` | 1.0 | 1.1 | Ollama applies penalty by default |
| `seed` | -1 (random) | 0 | Different random behaviour |

Feature Support

| Feature | llama.cpp | Ollama | MLX |
|---|---|---|---|
| Core (temp, top_p, top_k, min_p) | ✓ | ✓ | ✓ |
| Repetition penalties | ✓ | ✓ | ✗ |
| Presence/frequency penalties | ✓ | ✓ | ✗ |
| Mirostat | ✓ | ✓ | ✗ |
| Advanced (DRY, XTC, typical, dynatemp) | ✓ | ✗ | Partial |
| Custom sampler ordering | ✓ | ✗ | ✗ |

Core Sampling Parameters

Temperature

Controls the randomness of token selection by modifying the probability distribution before sampling. ...
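To make the effect of temperature and Min P concrete, here is a minimal pure-Python sketch of a sampler that first filters tokens with Min P and then applies temperature to the survivors. The function names (`softmax`, `min_p_mask`, `sample_token`) and the filter-then-temperature ordering are assumptions of this sketch; it is not the implementation used by llama.cpp, Ollama, or MLX. The defaults of 0.8 and 0.1 simply echo the llama.cpp values listed in the comparison above.

```python
import math
import random


def softmax(logits):
    """Convert raw logits into probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def min_p_mask(logits, min_p):
    """Indices of tokens whose probability is at least min_p * top probability."""
    probs = softmax(logits)
    threshold = min_p * max(probs)
    return [i for i, p in enumerate(probs) if p >= threshold]


def sample_token(logits, temperature=0.8, min_p=0.1, rng=None):
    """Filter with Min P, rescale the survivors by temperature, then sample."""
    if temperature <= 0:
        raise ValueError("temperature must be positive in this sketch")
    rng = rng or random.Random()
    kept = min_p_mask(logits, min_p)
    # Dividing logits by a temperature below 1 sharpens the distribution;
    # a temperature above 1 flattens it.
    probs = softmax([logits[i] / temperature for i in kept])
    return rng.choices(kept, weights=probs, k=1)[0]


if __name__ == "__main__":
    toy_logits = [5.0, 4.0, -5.0]  # made-up scores for a three-token vocabulary
    print(min_p_mask(toy_logits, 0.1))          # which tokens survive Min P
    print(sample_token(toy_logits, rng=random.Random(0)))
```

With the toy logits above, the third token falls far below the Min P threshold and can never be sampled, while lowering the temperature towards zero makes the first token win almost every time.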

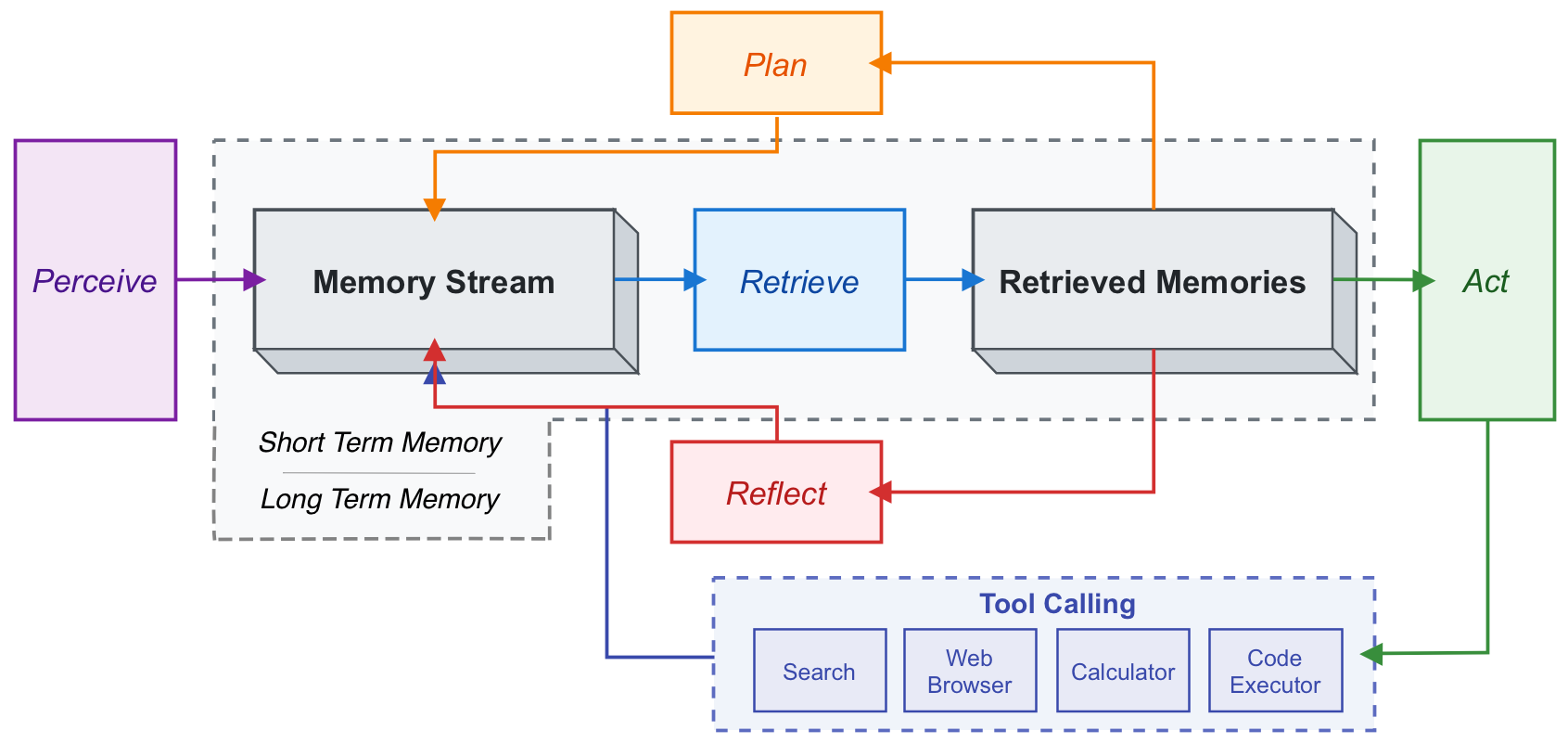

Getting Started with Agentic Systems - Developer Learning Paths

As agentic systems become increasingly central to modern software development, many engineers are looking to build practical skills but don’t know where to start. This guide provides a short list of pre-reading/watching and hands-on training resources to help you get started with developing with AI agents. The focus is on practical implementation of tools and methods you’re likely to use in the workplace, so you can quickly gain experience and confidence in building AI-powered and agentic systems. ...

The Cost of Agentic Coding

Don’t ask yourself “What if my high performing engineers spent $2k/month on agentic coding?” …ask yourself why they (and others) aren’t - and what opportunities they’re missing as a result. ...